Designing for AI at the Edge in 2026

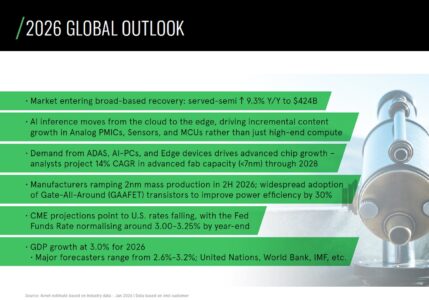

Artificial intelligence investment over the past two years has been concentrated in hyperscale infrastructure. While advanced accelerators, high-bandwidth memory, and leading-edge process nodes continue to absorb significant capital expenditure, Avnet Silica’s Q1 2026 Trendliner report notes that inference capability is increasingly being implemented beyond the cloud. Inference workloads are being deployed across a wider range of systems, driving incremental demand for analog devices, power management ICs, sensors, and microcontrollers.

Figure 1 – 2026 Global Outlook (AI moving beyond hyperscale).

This development reflects the expansion of AI deployment into embedded and endpoint environments rather than a replacement of centralised computing infrastructure. Hyperscale infrastructure remains central to AI development, but growing deployments in vehicles, industrial systems, and connected devices introduce power, thermal, and integration constraints that influence hardware design choices.

For design engineers, the significance lies in how this deployment pattern appears in component demand data. Headline growth figures provide context, but the composition of demand offers deeper insight. The distribution of activity across processing, memory, power, and analog categories reveals how AI functionality is being incorporated into system architectures. Component-level data does not describe design choices explicitly, yet it provides early visibility into where integration effort is increasing and where architectural complexity is evolving.

Integration Rather Than Isolation

The Q1 2026 Trendliner report highlights sustained activity across microcontrollers, programmable logic, analog, power management, and memory categories, the last of which faces the biggest constraints in today’s market. This distribution of demand suggests that AI capability is being incorporated within established embedded architectures rather than deployed primarily as discrete acceleration platforms.

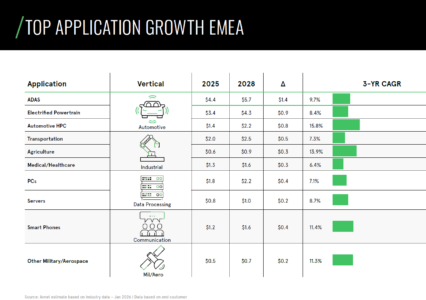

In automotive markets, continued expansion in ADAS and automotive high-performance computing segments indicates increasing compute density within vehicle electronics. These systems operate within domain-controlled and safety-critical environments where perception, control, connectivity, and power management functions coexist. Demand across embedded processing, memory, and supporting analog devices reflects the integration effort required to accommodate inference workloads within those consolidated electronic frameworks.

A similar pattern is visible in industrial and edge-oriented systems. Activity in microcontrollers and programmable logic categories aligns with inference being implemented inside programmable control platforms, machine vision nodes, and connected automation equipment. In these environments, AI capability must share real-time resources, communication bandwidth, and power distribution infrastructure. The breadth of supporting component demand indicates adaptation of existing control architectures rather than the proliferation of isolated AI subsystems.

Figure 2 – Automotive and Industrial applications lead growth in EMEA.

The category profile, therefore, points to inference being embedded within mainstream electronic domains, with increasing system-level complexity.

Memory as a Structural Indicator

The report also indicates continued demand across memory categories, accompanied by extended lead times, price adjustments, and allocations in selected DRAM and NAND segments. While these conditions reflect broader market dynamics, they also align with the evolving memory profile of AI-enabled systems.

Inference implemented at the edge does not carry the same scale as data-centre training workloads, but it increases baseline memory requirements within embedded platforms. Model parameters, intermediate buffers, and software stacks share capacity alongside control logic and communication layers.

Continued demand in DRAM and NAND categories suggests that inference workloads are affecting how memory is provisioned in embedded designs. In automotive and industrial systems, AI functions typically share memory resources with control and connectivity software, adding complexity to allocation and sizing decisions. From a design perspective, memory configuration becomes central to system architecture. Component-level demand data provides early visibility into how these requirements are evolving.
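
As a rough illustration of the sizing question described above, the sketch below adds up the memory an inference workload might claim alongside control and connectivity software. It is a back-of-envelope estimate rather than a method taken from the report: the class and function names, the model size, and every figure in the example are hypothetical values chosen only to show the arithmetic.

```python
from dataclasses import dataclass

MIB = 1024 * 1024  # bytes per mebibyte


@dataclass
class InferenceFootprint:
    """Rough RAM estimate for one on-device model (all inputs are assumptions)."""
    num_parameters: int       # number of weights in the model
    bytes_per_parameter: int  # e.g. 1 for int8, 2 for fp16, 4 for fp32
    activation_bytes: int     # peak intermediate buffers during one inference
    runtime_bytes: int        # inference runtime and supporting software stack

    def total_bytes(self) -> int:
        """Weights plus intermediate buffers plus runtime overhead."""
        weights = self.num_parameters * self.bytes_per_parameter
        return weights + self.activation_bytes + self.runtime_bytes


def remaining_bytes(total_ram: int, reserved_for_control: int,
                    footprint: InferenceFootprint) -> int:
    """RAM left once control/connectivity software and the model are placed.

    A negative result means the configuration does not fit.
    """
    return total_ram - reserved_for_control - footprint.total_bytes()


# Hypothetical example: a 5M-parameter int8 model on a 16 MiB platform
# where 6 MiB is already reserved for control and communication software.
model = InferenceFootprint(
    num_parameters=5_000_000,
    bytes_per_parameter=1,
    activation_bytes=2 * MIB,
    runtime_bytes=1 * MIB,
)
headroom = remaining_bytes(total_ram=16 * MIB,
                           reserved_for_control=6 * MIB,
                           footprint=model)

print(f"Model footprint: {model.total_bytes() / MIB:.2f} MiB")
print(f"Headroom:        {headroom / MIB:.2f} MiB")
```

Changing `bytes_per_parameter` from 1 to 4 in this example pushes the headroom negative, which is the kind of sensitivity that makes memory configuration an architectural decision rather than a late-stage detail.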

Power and Analog Demand in Context

Data from the Q1 2026 report further shows continued activity in power management and analog device categories. These components form the enabling layer that allows inference to operate within real-world constraints.

Embedding AI capability into endpoint systems increases dynamic load variation, peak current demand, and sensitivity to signal integrity. In vehicles and industrial platforms, extended temperature requirements and rigorous validation processes further constrain component selection. Once devices are qualified within these long lifecycle environments, architectural changes become costly and slow to implement. Sustained activity across embedded processing, memory, and supporting power categories is consistent with inference capability being embedded into platforms designed for long-term deployment. Demand across these categories reflects implementation within tightly bounded system envelopes rather than abstract compute environments.
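
To make the load-variation point concrete, the following sketch compares average power with peak rail current for a device that alternates between an idle state and inference bursts. It is a simplified two-state model under stated assumptions, not data from the report: the function names and all wattage, voltage, and duty-cycle values are hypothetical.

```python
def average_power_w(idle_w: float, active_w: float, duty_cycle: float) -> float:
    """Time-averaged power for a load that is active for `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return idle_w + (active_w - idle_w) * duty_cycle


def peak_current_a(active_w: float, rail_v: float) -> float:
    """Current drawn from the supply rail during an inference burst (I = P / V)."""
    if rail_v <= 0:
        raise ValueError("rail_v must be positive")
    return active_w / rail_v


# Hypothetical figures: 0.5 W idle, 3.0 W during inference,
# inference running 20% of the time, supplied from a 1.2 V rail.
avg_w = average_power_w(idle_w=0.5, active_w=3.0, duty_cycle=0.2)
peak_a = peak_current_a(active_w=3.0, rail_v=1.2)
avg_a = avg_w / 1.2

print(f"Average power: {avg_w:.2f} W")
print(f"Average rail current: {avg_a:.2f} A")
print(f"Peak rail current:    {peak_a:.2f} A")
```

In this example the average draw is modest, yet the regulator and decoupling network still have to be sized for the burst current, which is one reason power management selection tracks inference adoption so closely.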

Reading the Demand Composition

The Q1 2026 Trendliner report provides a component-level view of market activity rather than a direct description of architecture. Viewed in context, that activity offers insight into how AI capability is being structured across industries.

Sustained demand in embedded processing, memory, analog, and power categories indicates that inference is being engineered into existing embedded domains. The integration of these components within constrained systems reflects a design environment in which AI functionality coexists with control, connectivity, and sensing.

For engineers designing in 2026, the relevance lies in recognising how this composition of demand aligns with architectural practice. Component trends provide early signals of where AI capability is being embedded and where integration complexity is increasing. Distributed intelligence can be seen in the hardware choices that support it.

By: Thomas Foj, Vice President Supplier Management, Solutions, and Digitalisation EMEA, Avnet Silica

To read the full report go to my.avnet.com