Stratix 10 NX FPGAs are AI-optimised, says Intel

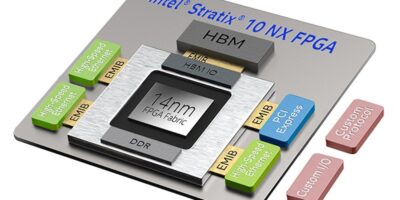

The Intel Stratix 10 NX FPGAs include capabilities such as AI Tensor blocks and hardware-programmable AI, which are intended for implementing customised hardware with integrated, high-performance AI.

The Stratix 10 NX FPGAs' high-performance AI Tensor blocks deliver up to 15 times more INT8 throughput than the DSP block in Intel Stratix 10 FPGAs for AI workloads, and are hardware programmable for customised AI workloads. Near-compute memory and an embedded memory hierarchy for model persistence are also included, together with integrated high bandwidth memory (HBM) and high bandwidth networking with up to 57.8G PAM4 transceivers and hard Ethernet blocks.

The flexible and customisable interconnect can scale across multiple nodes, adds Intel.

The Intel Stratix 10 NX FPGA fabric includes new types of AI-optimised Tensor arithmetic blocks (AI Tensor blocks). These blocks contain dense arrays of the lower-precision multipliers typically used in AI applications. The AI Tensor block's architecture is tuned for the matrix-matrix and vector-matrix multiplications common to a wide range of AI computations, for both small and large matrix sizes. The AI Tensor block multipliers have base precisions of INT8 and INT4 and support FP16 and FP12 numerical formats through shared-exponent support hardware. All additions or accumulations can be performed with INT32 or IEEE 754 single-precision floating point (FP32) precision, and multiple AI Tensor blocks can be cascaded together to support larger matrices.
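The shared-exponent idea can be illustrated in software. The following is a minimal sketch in Python, not a model of Intel's hardware: the function names (`quantise_block`, `block_dot`, `cascaded_dot`), the power-of-two scaling rule and the block size of four are all assumptions made for illustration. Each block of values is stored as INT8 mantissas with one common exponent, products are summed in an integer accumulator standing in for INT32, and longer vectors are handled by chaining blocks.

```python
import math


def quantise_block(values, mantissa_bits=8):
    """Quantise floats to signed integer mantissas that share one exponent.

    Hypothetical helper: the rounding and scaling rule here is an assumption,
    not the documented behaviour of the AI Tensor block.
    """
    limit = 2 ** (mantissa_bits - 1) - 1  # 127 for INT8 mantissas
    max_mag = max(abs(v) for v in values)
    if max_mag == 0.0:
        return [0] * len(values), 0
    # Smallest power-of-two scale that keeps the largest value within range.
    exponent = math.ceil(math.log2(max_mag / limit))
    scale = 2.0 ** exponent
    # Clamp to guard against rounding just past the mantissa limit.
    mantissas = [max(-limit, min(limit, round(v / scale))) for v in values]
    return mantissas, exponent


def block_dot(a, b, mantissa_bits=8):
    """Dot product of two blocks using integer multiply-accumulate."""
    ma, ea = quantise_block(a, mantissa_bits)
    mb, eb = quantise_block(b, mantissa_bits)
    acc = 0  # Python int standing in for INT32; overflow is not modelled
    for x, y in zip(ma, mb):
        acc += x * y
    # The two shared exponents are applied once, after accumulation.
    return acc * 2.0 ** (ea + eb)


def cascaded_dot(a, b, block_size=4):
    """Chain several blocks to cover a longer vector (assumed block size)."""
    total = 0.0
    for i in range(0, len(a), block_size):
        total += block_dot(a[i:i + block_size], b[i:i + block_size])
    return total
```

Because the exponent is applied only once per block, the inner loop needs nothing beyond small integer multipliers, which is the property that lets dense multiplier arrays serve floating-point-like formats. The cost is precision: small values that share a block with a much larger value lose low-order bits.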

Target applications include speech recognition, speech synthesis, deep packet inspection, congestion control identification, fraud detection, content recognition, and video pre- and post-processing.

The Intel Stratix 10 NX FPGAs also incorporate hard intellectual property (IP) such as PCI Express (PCIe) Gen3 x16 and 10/25/100G Ethernet media access control (MAC) / physical coding sub-layer (PCS) / forward error correction (FEC). Together with the transceivers, these blocks provide a scalable and flexible connectivity solution that can adapt to market requirements.